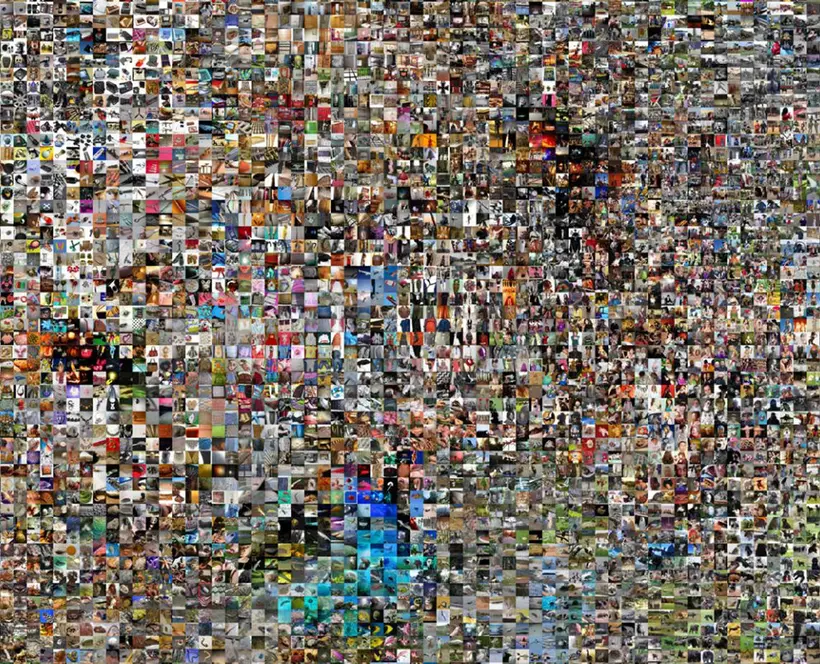

Philipp Schmitt’s Declassifier uses a computer vision algorithm trained on COCO, an image dataset developed by Microsoft in 2014. In the work, photographs from Schmitt’s series “Tunnel Vision” are tested and overlaid with images from which the algorithm learned in the first place. The generated collages unfold over the duration of the project, showing all COCO images that contributed to a given classification. If a car is identified in one of Schmitt’s photographs, all the cars included in the dataset that trained the algorithm surface on top of it.

Taking photographs to be tested on the algorithm challenges Schmitt’s way of looking: “I take photos for a computer ‘audience’ first. The human viewer is secondary. I shoot matter-of-fact, with little occlusion, as too much complexity will confuse the algorithm. It’s challenging to still take pictures that I myself find interesting.”

This process makes Schmitt consider the underlying power relationships in algorithmic photography. Neither the photographers whose images were scraped from the internet to create COCO nor the people portrayed in the streets by Schmitt gave their approval for their images to be used.

Declassifier exposes the myth of magically intelligent machines. The data from which machine learning algorithms learn to make predictions is hardly ever shown, let alone credited. By doing both, Schmitt highlights instead the diverse photographic sources that the algorithm draws on.

Declassifier is commissioned through an international open call for Data / Set / Match, a programme that seeks new ways to present, visualise and interrogate contemporary image datasets. To coincide with the work, Schmitt has written a new text on Unthinking Photography that looks into what a computer vision algorithm trained on a dataset such as COCO recognises in a photograph.

Biography

Philipp Schmitt is an artist, designer, and researcher based in Brooklyn, NY. His practice engages with the philosophical, poetic, and political dimensions of computation by examining the ever-shifting discrepancy between what is computable in theory and in reality. His current work addresses notions of opacity and the automation of perception in artificial intelligence research.